AI Literacy Is Everywhere. Decision Architecture Is Not.

Most AI education for leaders focuses on technology literacy, trend briefings, or high-level risk checklists. It explains how models work, showcases emerging use cases, or outlines governance principles. Almost all of it is designed for large enterprise audiences, assuming dedicated AI teams, large budgets, and established governance infrastructure. What it never addresses is the harder question facing the leader of a 10–250-person organisation today: how do you make AI strategy, governance, vendor, and team-adoption calls when there is no CHRO, no CIO, no procurement function, and no consulting budget, and the next decision is on your desk this week?

As AI capabilities commoditise, technical access is no longer the differentiator. Judgment is. The lean organisations that outperform will not be those with “more AI,” but those whose leaders make better decisions about it — faster, with less to lean on, and with consequences that land harder when they get it wrong. Founders, executive directors, principals, partners, and small leadership teams are not looking for another course or certification. They need something fundamentally different: a private, self-paced, continuously updated system that helps them think clearly about AI’s impact on the choices they actually make — in real time, as the landscape evolves.

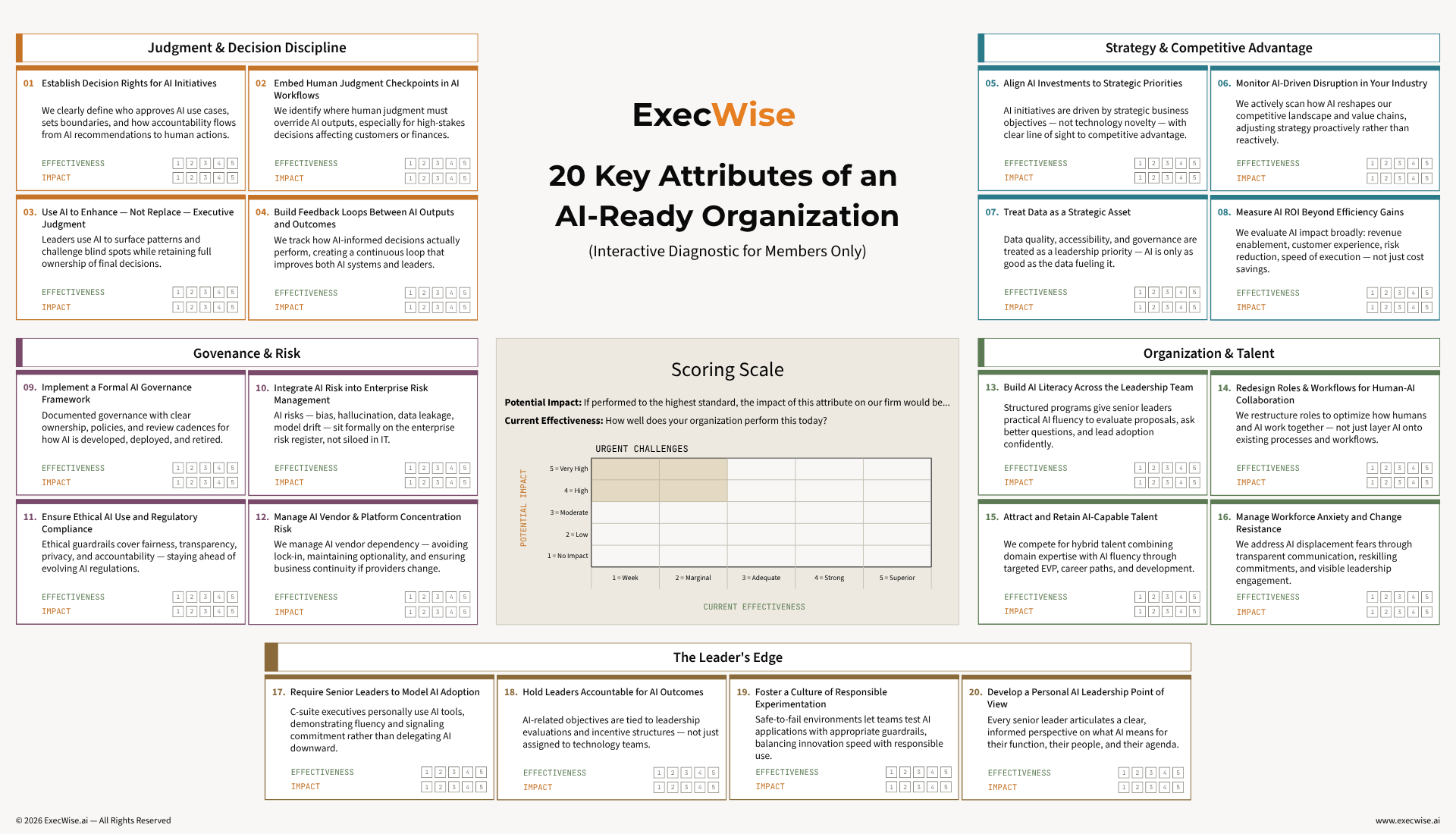

ExecWise exists to operate at that altitude. It is not a content library, a hype digest, or a workforce learning platform. It is a structured decision operating system for the leader who is also the board, the CHRO, the CFO, and the IT department. Every topic is built around a real tension in lean organisations (speed versus oversight, automation versus dissent, adoption versus accountability) and translated into concrete mechanisms: how to make AI tool decisions you can defend, where human override actually matters, what your stakeholders should be asking, and how to respond when something goes wrong without an enterprise crisis function to lean on.

The goal is not to make leaders more informed about AI. It is to make them more disciplined in how they decide about it.